Can you trust your eyes when 8 million synthetic files flood the internet annually? By 2026, deepfake fraud attempts have spiked by over 3,000%, making visual “proof” a massive liability for US businesses. To fight back, visual AI has pivoted from simple generation to rigorous, automated verification.

Enterprise-grade reliability now depends on “AI-as-a-Judge” systems and MLOps infrastructure. These tools replace subjective “vibe checks” with high-dimensional benchmarks to catch “uncanny valley” artifacts. Adopting these automated QA frameworks is no longer optional—it is a baseline for security.

Key Takeaways:

- Automated QA is mandatory: Deepfake fraud attempts have spiked over 3,000% by 2026, making “AI-as-a-Judge” MLOps systems a security baseline for visual content.

- Global Copyright Divergence & EU Regulation: The EU AI Act, operational August 2, 2026, mandates machine-readable marking of synthetic content (in practice, C2PA metadata) and imposes fines of up to €15 million or 3% of global turnover, whichever is higher.

- Advanced Deepfake Detection: New tools like SSTGNN and DiffusionQC detect “Uncanny Valley” artifacts, focusing on biological tells or latent space anomalies to catch “Zero-Day” fakes.

- Agentic Judges Scale QA: “Agent-as-a-Judge” systems can achieve 95% human alignment and cut evaluation costs by 97% using multi-agent debate and forensic tools.

How Does Copyright Compliance Shape Generative Video in 2026?

As IT managers and creative leads scale generative systems in 2026, copyright compliance has shifted from a legal abstraction to a primary operational constraint. The landscape is defined by a jurisdictional divide over authorship and a move toward strict transparency under the EU AI Act.

Authorship and Ownership: The “Human Touch” Requirement

A critical question for 2026 is whether a company owns the copyright to AI-generated code, images, or video. The global consensus has diverged into two distinct legal frameworks:

1. The Human Authorship Standard (US, EU, China)

In these jurisdictions, work generated solely by AI remains in the public domain. To claim ownership, a user must prove “substantial creative control.”

- The “Zarya” Precedent: In 2026, the US Copyright Office continues to follow its 2023 Zarya of the Dawn registration decision: you can copyright the arrangement and story of an AI-assisted work, but the raw AI images themselves are generally ineligible.

- Documentation as Defense: Workflows in 2026 now include Creative Process Records. IT teams use MLOps tools (like W&B Weave) to log every prompt iteration and manual edit. If you can show a “recursive refinement” process, where a human rejected ten versions and manually edited the eleventh, the legal claim for authorship is significantly strengthened.

2. The “Arrangements” Standard (UK, NZ, Ireland)

The UK remains a notable outlier, still recognizing “computer-generated works” where no human author exists.

- Ownership: Rights are assigned to the person who made the “arrangements necessary” for the work’s creation (e.g., the person who commissioned the model or designed the prompt architecture).

- 2026 Shift: Be aware that as of early 2026, the UK government is debating abolishing this protection to align with the US and EU, potentially moving toward a human-only authorship model by late 2027.

| Jurisdiction | Copyright Eligibility | Key 2026 Requirement |
| --- | --- | --- |
| United States | Significant human input only | Log of prompts and manual edits. |
| European Union | “Author’s own intellectual creation” | C2PA-compliant machine labeling. |
| United Kingdom | Eligible without human author | Proof of “necessary arrangements.” |
| China | Originality-based | Evidence of “aesthetic choice.” |

What are the EU AI Act’s 2026 requirements?

The EU AI Act (Regulation (EU) 2024/1689) becomes generally applicable on August 2, 2026. Failure to comply can result in administrative fines of up to €15 million or 3% of global annual turnover, whichever is higher.

- Training Data Summaries: GPAI providers (e.g., OpenAI, Anthropic) must publish a sufficiently detailed summary of their training content. The European Commission published the template for this public summary in July 2025. It requires disclosure of data sources (top domain names), data size (tokens/images), and processing methods.

- Mandatory Marking (Article 50): Every piece of AI-generated content published in the EU must be marked as synthetic in a machine-readable format. In 2026, this has effectively made C2PA metadata and invisible watermarking (like SynthID) mandatory for enterprise platforms.

- Copyright Opt-Outs: Developers must respect “Reservation of Rights” expressed via technical protocols.

What’s changing in Web Crawling and Opt-Outs?

The “Fair Use” status of training data remains a legal gray area. To mitigate regulatory pressure, Google and other major crawlers have introduced more granular controls:

- Separated Opt-Outs: As of January 2026, Google is testing updates to Google-Extended. This allows publishers to opt out of AI Overviews (Search generative features) without being delisted from traditional Google Search results.

- The Retroactivity Gap: Opt-outs are not retroactive, which creates a paradox for IT managers: you can opt out of new training, but your existing public data may already be embedded in 2025-era models.
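
To see how a crawler-level opt-out behaves in practice, here is a minimal sketch using Python's standard `urllib.robotparser`. The robots.txt content and the `example.com` URL are hypothetical, and the sketch only shows how a per-crawler rule is evaluated. It does not model how Google applies the `Google-Extended` token internally.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block the Google-Extended token, allow everyone else.
ROBOTS_TXT = """\
User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""


def crawler_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if `user_agent` may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


ai_allowed = crawler_allowed(ROBOTS_TXT, "Google-Extended", "https://example.com/gallery/")
search_allowed = crawler_allowed(ROBOTS_TXT, "Googlebot", "https://example.com/gallery/")
print(f"Google-Extended allowed: {ai_allowed}, Googlebot allowed: {search_allowed}")
```

A check like this can run in CI so that a robots.txt change never silently re-opens a site to crawlers it meant to exclude.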

Enterprise Risk Strategy

- Indemnification Carve-outs: Most “Copyright Commitments” (from Microsoft, Adobe, etc.) are void if you intentionally prompt the AI to mimic a specific artist’s style or bypass safety filters.

- Internal Data Hygiene: In 2026, employees pasting confidential code into public, non-enterprise AI tools is treated as both an ethical violation and a trade-secret leak. Use enterprise-only instances where data is “frozen” and not used for training.

- Audit Logs: Implement Governance-as-Code. Ensure every AI-generated asset used in a commercial campaign is linked to an immutable log of the human’s creative choices to support future copyright filings.
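
One way to approximate an “immutable” log of creative choices is a hash chain, where each record commits to the one before it. The sketch below is illustrative only: the record fields (`asset`, `action`, and so on) are made up for this example, and a hash chain is tamper-evident rather than truly immutable, so it still needs write-once storage behind it.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first record


def _digest(prev_hash: str, event: dict) -> str:
    """Hash the previous record's hash together with this event."""
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_record(log: list, event: dict) -> dict:
    """Append `event` to `log`, chaining it to the previous record."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    record = {"prev": prev_hash, "event": event, "hash": _digest(prev_hash, event)}
    log.append(record)
    return record


def verify_chain(log: list) -> bool:
    """Recompute every hash; an edited, removed, or reordered record breaks the chain."""
    prev_hash = GENESIS
    for record in log:
        if record["prev"] != prev_hash or record["hash"] != _digest(prev_hash, record["event"]):
            return False
        prev_hash = record["hash"]
    return True


log = []
append_record(log, {"asset": "campaign-hero.png", "action": "prompt", "text": "sunrise over harbor"})
append_record(log, {"asset": "campaign-hero.png", "action": "reject", "reason": "flat lighting"})
append_record(log, {"asset": "campaign-hero.png", "action": "manual_edit", "tool": "retouch"})
print("chain intact:", verify_chain(log))
```

Logging rejections and manual edits alongside prompts is what lets the record double as evidence of human creative control.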

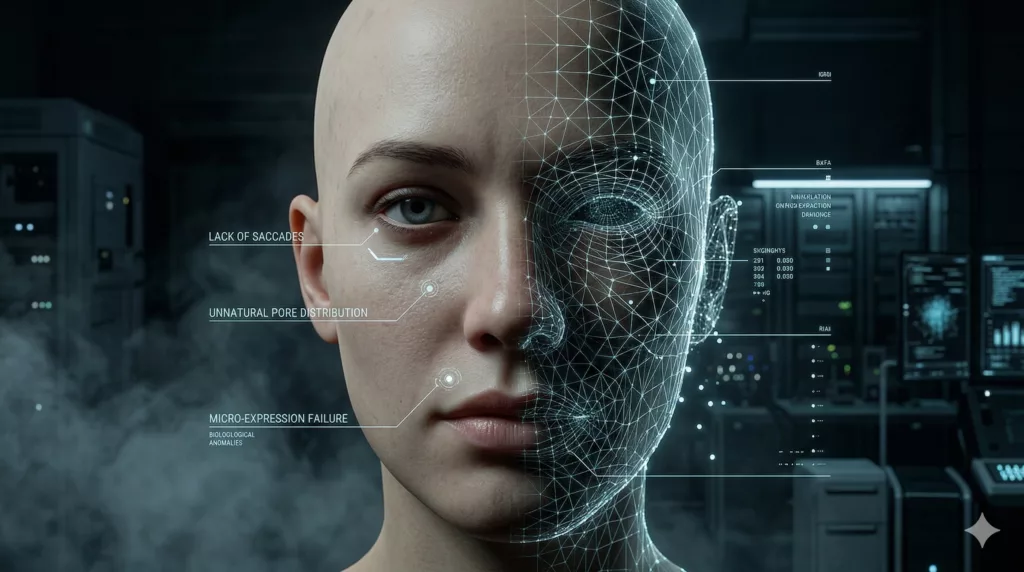

How do we detect Uncanny Valley artifacts?

In 2026, the Uncanny Valley is no longer just a psychological theory; it is a quantifiable engineering challenge. As synthetic humans move from 95% to 99% realism, the brain’s Action Perception System (APS)—specifically the mirror neuron network—becomes more sensitive to the “mismatch” between appearance and biological motion.

The Neural Basis: Mirror Neurons and Prediction Error

Research in 2026 highlights that “uncanniness” is often caused by Prediction Error in the brain’s sensorimotor cortex.

- The Conflict: When an AI avatar looks perfectly human but moves with subtle geometric rigidity, the observer’s mirror neurons fire to simulate the action, but the visual input fails to match the neural expectation.

- The Amygdala Response: This mismatch triggers a subconscious revulsion response. Detection tools in 2026 use Neuro-Symbolic AI to approximate this “human discomfort” with high-dimensional distance metrics such as Fréchet Inception Distance (FID) and Kernel Inception Distance (KID). Both compare the feature statistics of a set of generated frames against a set of real ones, and larger distances are reported to correlate with human unease.
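
For readers who want the math behind FID: under the standard definition, the Inception features of the real and generated sets are each modelled as a Gaussian, and the metric is the Fréchet distance between the two. The symbols below are notation introduced here, with $\mu_r, \Sigma_r$ the mean and covariance of real-image features and $\mu_g, \Sigma_g$ those of generated-image features.

$$
\mathrm{FID} = \lVert \mu_r - \mu_g \rVert_2^2 + \operatorname{Tr}\left( \Sigma_r + \Sigma_g - 2\left( \Sigma_r \Sigma_g \right)^{1/2} \right)
$$

A score of $0$ means the two feature distributions match exactly. Because FID is computed over sets of images, it says something about a model or a batch, not about a single suspect frame.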

Humanity Markers: Detecting the “Dead Eye” and “Hollow” Smile

To identify synthetic humans, 2026 forensic platforms focus on three biological “tells” that remain difficult for even the latest generative models, including those trained on Blackwell-class hardware, to master:

- Facial Action Unit (AU) Synchronization: Authentic smiles involve the perfect orchestration of the eyes (orbicularis oculi) and the mouth (zygomatic major). AI often fails this sync, creating the “dead eye” effect where the eyes remain static while the mouth moves.

- Micro-Expression Fluidity: Detectors now use CNN-LSTM architectures to analyze the temporal transitions of micro-expressions. Real human emotions ripple across the face in waves; AI expressions often “teleport” or snap between states too abruptly.

- Speech-Motion Coordination (AVSFF): The Audio-Visual Synchronization and Fusion Framework (AVSFF) has become the 2026 standard for detecting deepfakes. It analyzes the fine-grained relationship between the tongue, teeth, and jaw during speech. If the “spectral-temporal” alignment of the audio doesn’t match the physical mechanics of the throat and mouth, the asset is flagged.
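
The first marker, AU synchronization, can be illustrated with a very small sketch. It assumes some upstream face tracker has already produced per-frame intensities for AU6 (cheek raiser, driven by the orbicularis oculi) and AU12 (lip corner puller, driven by the zygomatic major). The sample values and the `min_corr` cutoff are invented for illustration and are not taken from any production detector.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation of two equal-length series; 0.0 if either is constant."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length >= 2")
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a perfectly static signal cannot co-vary with anything
    return cov / sqrt(var_x * var_y)


def dead_eye_suspected(eye_au, mouth_au, min_corr=0.5):
    """Flag a smile whose eye activity does not track its mouth activity."""
    return pearson(eye_au, mouth_au) < min_corr


mouth = [0.0, 1.0, 2.0, 3.0, 3.0, 2.0, 1.0, 0.0]         # AU12 over eight frames
genuine_eyes = [0.0, 0.5, 1.5, 2.5, 2.5, 1.5, 0.5, 0.0]  # AU6 rises and falls with the mouth
static_eyes = [0.1] * 8                                  # AU6 never moves

print("genuine smile flagged:", dead_eye_suspected(genuine_eyes, mouth))
print("static-eye smile flagged:", dead_eye_suspected(static_eyes, mouth))
```

A real detector would also look at timing offsets between the two signals, but even this simple correlation shows why a moving mouth with frozen eyes stands out.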

Technical Verification & Forensic Toolkit

The “Toolkit for Truth” in 2026 combines classic physics-based analysis with deep learning forensic indicators:

| Detection Method | Target Artifact | Technical Implementation |
| --- | --- | --- |
| Vanishing Point Analysis | Perspective Violations | Verifies that parallel lines (e.g., in buildings) converge at a single horizon point. |
| Shadow & Reflection Trace | Physics Inconsistency | Confirms shadows point to a single light source and reflections meet surfaces at correct angles. |
| Pixel Texture Check | Mathematical Uniformity | Magnifies skin/sky at 100% to find “natural chaos” vs. AI’s suspiciously uniform patterns. |
| Biological Signal (rPPG) | Pulse & Blood Flow | Analyzes subtle skin color changes (remote photoplethysmography) to detect a heartbeat. |
| C2PA Provenance | Authenticity History | Checks for cryptographically signed Content Credentials via the C2PA Verify Tool. |
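
The vanishing point check in the first row is simple geometry, and a rough sketch makes the idea concrete. It assumes the line segments (edges that are parallel in the real scene) have already been extracted from the image. The coordinates and the idea of measuring “spread” in pixels are assumptions made for this example.

```python
from itertools import combinations
from math import hypot


def line_through(p, q):
    """Coefficients (a, b, c) of the line a*x + b*y + c = 0 through points p and q."""
    (x1, y1), (x2, y2) = p, q
    return (y1 - y2, x2 - x1, x1 * y2 - x2 * y1)


def intersection(l1, l2):
    """Intersection point of two lines, or None if they are parallel in the image."""
    a1, b1, c1 = l1
    a2, b2, c2 = l2
    det = a1 * b2 - a2 * b1
    if abs(det) < 1e-9:
        return None
    return ((b1 * c2 - b2 * c1) / det, (a2 * c1 - a1 * c2) / det)


def vanishing_point_spread(segments):
    """Largest distance (in pixels) of any pairwise intersection from their centroid."""
    lines = [line_through(p, q) for p, q in segments]
    points = []
    for l1, l2 in combinations(lines, 2):
        point = intersection(l1, l2)
        if point is not None:
            points.append(point)
    if not points:
        return float("inf")  # nothing converges at all
    cx = sum(x for x, _ in points) / len(points)
    cy = sum(y for _, y in points) / len(points)
    return max(hypot(x - cx, y - cy) for x, y in points)


# Three edges that all converge on (100, 50): a consistent perspective.
consistent = [((0, 0), (50, 25)), ((0, 100), (50, 75)), ((0, 50), (40, 50))]
# Same scene, but the third edge has drifted: no single vanishing point.
inconsistent = [((0, 0), (50, 25)), ((0, 100), (50, 75)), ((0, 80), (40, 80))]

print("consistent spread:", vanishing_point_spread(consistent))
print("inconsistent spread:", vanishing_point_spread(inconsistent))
```

A small spread means the edges agree on one vanishing point. A large spread is a hint, not proof, of a perspective violation, since lens distortion and edge-detection noise also move intersections around.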

Industry Standards: TrueMedia and C2PA

By 2026, newsrooms have standardized on TrueMedia.org (now powered by Georgetown University) as a primary filter. TrueMedia aggregates dozens of specialized detectors—such as DistilDIRE for real-time reconstruction and FTCN for temporal coherence—to provide a “Total Authenticity Score.”

Any asset with a forensic probability of forgery above 70% triggers a mandatory human-in-the-loop review, which focuses on the specific “humanity markers” identified by the AI, such as unnatural eye-tracking or “mathematically perfect” skin textures.
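
As a sketch, the routing rule is a one-line threshold. The 70% figure comes from the text above. The function name, the `auto_pass` label, and what happens to assets at or below the threshold are assumptions made for this example.

```python
REVIEW_THRESHOLD = 0.70  # forgery probability above which a human must review


def route_asset(forgery_probability: float) -> str:
    """Return the next pipeline step for an asset given its forgery probability."""
    if not 0.0 <= forgery_probability <= 1.0:
        raise ValueError("forgery_probability must be between 0 and 1")
    if forgery_probability > REVIEW_THRESHOLD:
        return "human_review"
    return "auto_pass"  # assumption: anything not escalated continues automatically


for probability in (0.12, 0.70, 0.93):
    print(probability, "->", route_asset(probability))
```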

How are Motion and Temporal Consistency Achieved?

In 2026, the industry has shifted from simple “visual fidelity” to Motion Coherence (the physical logic of movement) and Temporal Consistency (the stability of identity and lighting over time). As models move toward “World Model” status, the benchmark for success is no longer just a smooth frame, but an understanding of cause-and-effect and structural integrity.

Detecting Artifacts: The SSTGNN Framework

Temporal flickering—rapid, unintended changes in pixel intensity or texture—remains a core hurdle for diffusion models. The SSTGNN (Spatial-Spectral-Temporal Graph Neural Network) framework has emerged as the 2026 standard for diagnosing these failures in real-time.

- Graph-Based Modeling: SSTGNN treats video patches as nodes in a dynamic graph. By using negatively weighted edges, it calculates Spatial-Temporal Differentials—identifying sharp, non-physical changes between corresponding frames.

- Spectral Filtering: The framework applies learnable spectral filters to detect anomalies in the frequency domain. This is particularly effective at catching “denoising artifacts” that are invisible to the eye but result in a subconscious “digital feel.”

- Lightweight Efficiency: Despite its complexity, SSTGNN is optimized for production, using up to 42x fewer parameters than previous state-of-the-art detection models, allowing it to run as a real-time monitor on inference nodes.

Structural Integrity: The MoSA Framework

To eliminate “melted limbs” and “ghosting,” 2026 pipelines utilize the MoSA (Motion-coherent Structure-Appearance) framework. This approach fundamentally changes how video is generated by decoupling physics from aesthetics.

- Stage 1: Structure Generation: A 3D structure transformer, pretrained on massive motion datasets, generates a sequence of 3D keypoints from a text prompt. This ensures the “skeleton” follows the laws of physics before a single pixel is drawn.

- Stage 2: Appearance Synthesis: The visual skin, clothing, and background are “shrink-wrapped” onto the structural skeleton. Human-Aware Dynamic Control modules use dense tracking constraints to ensure that clothing and textures don’t “slip” or change identity during fast movements.

2026 Benchmarks: Beyond FVD

While Fréchet Video Distance (FVD) provides a coarse measure of visual similarity to real video, the 2026 MLOps stack relies on the VBench 2.0 suite for granular diagnostics.

| Dimension | 2026 Metric Goal | Diagnostic Objective |
| --- | --- | --- |
| Temporal Flickering | $\text{Score} > 0.95$ | Measure pixel intensity stability across 5s+ clips. |
| Motion Smoothness | $\text{L1 Error} < 0.05$ | Detect jitter using AMT (All-around Motion Transformer). |
| Dynamic Degree | High | Ensure natural intensity of movement (Optical Flow norm). |
| Subject Consistency | DINOv2 Match | Verify the character’s face/clothing doesn’t “morph” mid-scene. |
| Physical Plausibility | VBench-2.0 | Check for gravity, collision, and anatomical violations. |
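
To make the flickering row less abstract, here is a rough proxy for a temporal flickering score: one minus the mean absolute frame-to-frame intensity change, normalized to the 0-255 range. This is a simplified stand-in, not the VBench implementation, and it assumes grayscale frames flattened to equal-length lists. It is only meaningful on near-static shots, because real motion also changes pixel values.

```python
def temporal_flicker_score(frames):
    """Return a score in [0, 1]; 1.0 means consecutive frames are identical."""
    if len(frames) < 2:
        raise ValueError("need at least two frames")
    total_diff = 0.0
    pixel_count = 0
    for previous, current in zip(frames, frames[1:]):
        if len(previous) != len(current):
            raise ValueError("all frames must have the same number of pixels")
        total_diff += sum(abs(a - b) for a, b in zip(previous, current))
        pixel_count += len(current)
    mean_abs_diff = total_diff / pixel_count
    return 1.0 - mean_abs_diff / 255.0


# Tiny 4-pixel "frames" for illustration.
stable_clip = [[100, 101, 100, 99], [100, 100, 100, 100], [101, 100, 100, 100]]
flickering_clip = [[0, 0, 0, 0], [255, 255, 255, 255], [0, 0, 0, 0]]

print("stable clip:", round(temporal_flicker_score(stable_clip), 4))
print("flickering clip:", round(temporal_flicker_score(flickering_clip), 4))
```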

The “World Model” Transition

By late 2026, the focus has moved from Perceptual Verisimilitude (looks real) to Intrinsic Faithfulness (acts real). Models like Runway Gen-3.5 and Google Veo-XL are now evaluated on their ability to handle complex human-environment interactions—such as a character picking up an object—without the object disappearing or the hand warping.

How do AI Judges assess Visual Fidelity?

As we move through 2026, the volume of synthetic media has rendered human-only review obsolete for production scaling. The industry has pivoted to “Agent-as-a-Judge” frameworks—multimodal systems that don’t just “score” an image, but actively investigate it using specialized tools, temporal memory, and reasoning traces.

The Agentic Paradigm Shift

Unlike traditional “LLM-as-a-Judge” models that act as black-box critics, an Agent-as-a-Judge is an active participant in the evaluation pipeline.

- Trajectory Analysis: The agent observes the entire generation “trajectory.” In video, it can pinpoint the exact frame where a character’s identity drifted or where an occlusion failed, providing intermediate feedback that helps developers debug the latent decoder directly.

- Tool-Augmented Verification: These agents utilize external tools—such as SSTGNN for spectral anomaly detection or rPPG for pulse-sign detection—to verify physical and biological plausibility.

- Multi-Agent Debate: State-of-the-art frameworks (e.g., TopicalChat-2026) employ “discussion panels” where multiple agents (a critic, a defender, and a domain expert) debate the output. This approach has reached a Kendall Tau correlation of 0.57 with human experts, significantly higher than single-pass GPT-4o evaluators.
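
The Kendall Tau figure quoted above is easy to compute for your own judge. The sketch below implements the simple tau-a variant (no tie correction) in plain Python. The two score lists are invented to show the mechanics and are not real evaluation data.

```python
from itertools import combinations


def kendall_tau(scores_a, scores_b):
    """Kendall tau-a: (concordant pairs - discordant pairs) / total pairs."""
    if len(scores_a) != len(scores_b) or len(scores_a) < 2:
        raise ValueError("need two score lists of equal length >= 2")
    concordant = discordant = 0
    for i, j in combinations(range(len(scores_a)), 2):
        direction = (scores_a[i] - scores_a[j]) * (scores_b[i] - scores_b[j])
        if direction > 0:
            concordant += 1
        elif direction < 0:
            discordant += 1
    total_pairs = len(scores_a) * (len(scores_a) - 1) // 2
    return (concordant - discordant) / total_pairs


human_ranking = [1, 2, 3, 4, 5]  # five videos ranked by a human expert
judge_ranking = [1, 3, 2, 4, 5]  # the AI judge swaps two adjacent videos

print("tau:", kendall_tau(human_ranking, judge_ranking))
```

A value of 1.0 means the judge orders every pair of videos the same way the human does, 0 means no relationship, and -1.0 means the order is fully reversed.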

Establishing a Reliable Evaluation Rubric

In 2026, professional MLOps teams have abandoned 1–10 Likert scales to avoid “mean-reversion” (where scores cluster at 7). Instead, they use discrete, actionable categories.

| Quality Category | Criteria for AI Video | Technical Indicator |
| --- | --- | --- |
| Fully Correct | Perfect physics; consistent lighting; no flickering. | Zero detected spectral anomalies in SSTGNN. |
| Incomplete | High visual quality but missing prompt elements. | Fails alignment in T2V-CompBench. |
| Contradictory | Violates physical laws (e.g., clipping). | High outlier score in DiffusionQC. |
| Uncanny/Fail | Triggers revulsion or identity drift. | Amygdala-aligned revulsion score $> 0.8$. |
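
A rubric like this maps naturally onto an enum plus a small decision function. In the sketch below, the four categories and the 0.8 cutoff come from the table. The field names, the order in which checks run, and the handling of a clip that has spectral anomalies but no other failure are assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Grade(Enum):
    FULLY_CORRECT = "Fully Correct"
    INCOMPLETE = "Incomplete"
    CONTRADICTORY = "Contradictory"
    UNCANNY_FAIL = "Uncanny/Fail"


@dataclass(frozen=True)
class Indicators:
    # Hypothetical detector outputs, named for this sketch only.
    spectral_anomalies: int  # anomalies reported by a temporal-artifact detector
    prompt_aligned: bool     # did the prompt-alignment benchmark pass?
    physics_outlier: bool    # did an outlier detector flag a physical violation?
    revulsion_score: float   # 0..1 "uncanny" score


def assign_grade(indicators: Indicators) -> Grade:
    """Map indicators to exactly one category, checking the worst outcome first."""
    if indicators.revulsion_score > 0.8:
        return Grade.UNCANNY_FAIL
    # Assumption: leftover spectral anomalies are treated like a physics violation.
    if indicators.physics_outlier or indicators.spectral_anomalies > 0:
        return Grade.CONTRADICTORY
    if not indicators.prompt_aligned:
        return Grade.INCOMPLETE
    return Grade.FULLY_CORRECT


clean = Indicators(spectral_anomalies=0, prompt_aligned=True, physics_outlier=False, revulsion_score=0.1)
print(assign_grade(clean).value)
```

Returning a single label, rather than a 1–10 number, is what keeps the output actionable: each label points at a different team or fix.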

Best Practices: The 2026 Alignment Check

To ensure the AI judge scales human expertise rather than inventing its own, the following “Alignment Check” is now standard protocol:

- Manual Ground-Truthing: Developers grade a “Golden Set” of at least 50–100 outputs manually. If human annotators cannot agree on a grade, the rubric is considered flawed and must be rewritten.

- Anchor Examples (Few-Shot): The judge’s prompt includes “Anchors”—pre-graded samples of a “Fully Correct” vs. a “Contradictory” output.

- Chain-of-Thought (CoT): The judge is instructed to analyze step-by-step (e.g., “1. Check background stability; 2. Verify hand geometry”) before providing a final label. This creates a valuable audit trail for debugging.

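- Temperature Zero: For consistency, judges are run at a temperature of $0$ so that the same video receives the same grade on repeated evaluations. Hosted models can still show small run-to-run variation at temperature $0$, so borderline assets are worth re-running before a grade is treated as final.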
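
The “can annotators agree?” test in the first step can be quantified. One common choice, used here as an assumption because the text does not name a statistic, is Cohen's kappa, which corrects raw agreement for the agreement two graders would reach by chance. The labels below are invented sample data.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labelling the same items."""
    n = len(labels_a)
    if n == 0 or n != len(labels_b):
        raise ValueError("need two non-empty label lists of equal length")
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement: probability both pick the same label independently.
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1.0 - expected)


annotator_1 = ["Fully Correct", "Fully Correct", "Incomplete", "Contradictory", "Fully Correct", "Incomplete"]
annotator_2 = ["Fully Correct", "Fully Correct", "Incomplete", "Contradictory", "Incomplete", "Incomplete"]

kappa = cohens_kappa(annotator_1, annotator_2)
print(f"kappa = {kappa:.3f}")
```

Teams typically pick their own cutoff for “good enough” agreement. If kappa on the Golden Set is low, the rubric wording is the first thing to revisit, before any judge prompt is tuned.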

The 2026 Bottom Line: We are no longer asking if a video “looks good.” We are using agentic systems to verify if it “is right.” By 2026, this “Agentic Flywheel” allows startups to achieve 95% human-alignment while reducing evaluation costs by 97%.

What is Latent Space Artifact Detection?

In 2026, the detection of synthetic media has moved beyond analyzing final pixels to inspecting the Latent Space—the mathematical foundation where synthesis occurs. This “Inside-Out” forensic approach allows for the identification of artifacts and the embedding of provenance before an image or video is even rendered.

Latent Space Watermarking: DistSeal

Traditional pixel-space watermarking is computationally heavy and easily stripped. DistSeal, a framework popularized in early 2026, shifts the watermark injection directly into the latent representations of diffusion and autoregressive models.

- In-Model Distillation: DistSeal trains a post-hoc latent watermarker and then “distills” it directly into the generator’s weights or the latent decoder. This ensures the watermark is “born” with the image, making it nearly impossible to remove without destroying the asset.

- Performance: Because it operates on compressed latent tensors (e.g., $64 \times 64$ for a $512 \times 512$ image), it achieves a 20x speedup over traditional methods, adding negligible latency to high-throughput AI pipelines.

- Robustness: Distilled latent watermarks have shown superior resilience against “anti-forensic” attacks, such as aggressive re-compression or noise injection, compared to their pixel-space predecessors.

Outlier Detection with DiffusionQC

Rather than looking for a specific “list of fakes,” DiffusionQC treats deepfake detection as an anomaly detection problem.

- The Natural Manifold: The system is built on a diffusion model trained exclusively on high-fidelity, organic data.

- Manifold Deviation: When a synthetic image is processed, DiffusionQC attempts to “purify” or reconstruct it within its learned manifold of real images. If the reconstruction error (the distance between the input and the purified manifold) exceeds a specific threshold, it is flagged as synthetic.

- Zero-Day Detection: This “undirected” approach is highly effective against novel generative models (e.g., a new 2026 architecture) because it doesn’t need to know what the “new fake” looks like; it only needs to know what “real” looks like.
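
The core idea, learning only what “real” looks like and flagging anything that reconstructs poorly, can be shown with a toy example. The sketch below is not DiffusionQC: it replaces the diffusion model with a nearest-neighbour lookup over a handful of 2-D “real” feature vectors, and the `margin` factor is an arbitrary choice. Only the thresholding logic carries over.

```python
from math import dist


def reconstruction_error(sample, real_samples):
    """Stand-in 'purifier': reconstruct as the nearest real sample, return the distance."""
    return min(dist(sample, real) for real in real_samples)


def fit_threshold(real_samples, margin=1.5):
    """Calibrate on real data only: worst leave-one-out error, times a safety margin."""
    errors = []
    for index, sample in enumerate(real_samples):
        others = real_samples[:index] + real_samples[index + 1:]
        errors.append(reconstruction_error(sample, others))
    return margin * max(errors)


def is_flagged(sample, real_samples, threshold):
    """True if the sample sits too far from everything the detector knows as real."""
    return reconstruction_error(sample, real_samples) > threshold


real_features = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (0.1, 0.1)]
threshold = fit_threshold(real_features)

print("real-like probe flagged:", is_flagged((0.05, 0.05), real_features, threshold))
print("off-manifold probe flagged:", is_flagged((1.0, 1.0), real_features, threshold))
```

Note that no synthetic example was needed to set the threshold, which is the property that makes this family of detectors attractive against generators nobody has seen yet.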

Mechanistic Interpretability and ‘Forensic Axes’

In a major 2026 breakthrough, researchers began using Sparse Autoencoders (SAEs) to perform a “mechanistic autopsy” on deepfake detectors. This has transformed detectors from black boxes into interpretable forensic tools.

- Forensic Axes: Analysis of model backbones (like Qwen2-VL) reveals that only a tiny fraction of latent features are active during detection. These are known as Forensic Axes—specific neurons or directions in the latent space that are highly selective for specific artifacts like boundary blur, lighting inconsistency, or geometric warping.

- Forensic Heatmaps: By isolating these axes, tools can generate high-fidelity heatmaps. Unlike Grad-CAM, which only shows “where” the model looked, these heatmaps show “why”—explicitly highlighting that a video was flagged due to “Color Mismatch” or “Spectral Distortion” in the latent denoising path.

| Tech Component | Primary Function | 2026 Practical Benefit |
| --- | --- | --- |
| DistSeal | In-model latent watermarking | Tamper-proof, high-speed attribution. |
| DiffusionQC | Natural manifold outlier detection | Catches “Zero-Day” deepfakes without training on fakes. |
| Sparse Autoencoders | Identifying “Forensic Axes” | Provides human-readable reasons for a “Fake” flag. |

What defines “Acceptable Quality” in 2026?

In 2026, the industry has officially moved past the “Spaghetti Test” era. As generative video transitions from a viral novelty to a professional utility, the benchmarks for “Acceptable Quality” have evolved to focus on Human Perceptual Alignment and Causal Reasoning.

The Global-Local Image Perceptual Score (GLIPS)

By early 2026, GLIPS has replaced FID (Fréchet Inception Distance) as the authoritative metric for visual quality. While FID was prone to “metric hacking”—where models improved their scores without actually looking better to humans—GLIPS focuses on how humans actually process imagery.

- Transformer-Based Attention: GLIPS uses attention mechanisms to evaluate “Local Similarity” (fine textures, skin pores, and sharp edges).

- Maximum Mean Discrepancy (MMD): This evaluates “Global Distributional Similarity,” checking if high-level elements like lighting, shadows, and composition are mathematically consistent.

- The Interpolative Binning Scale (IBS): To make these scores useful for non-engineers, GLIPS results are mapped to the IBS, a 2026 standardized scale:

  - Grade A (Photorealistic): Indistinguishable from camera-captured content.

  - Grade B (High Quality): Minor flickering or soft edges; acceptable for social media.

  - Grade C (Uncanny): Detectable physical violations or “dead eye” effects.
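
The MMD component mentioned above is a standard statistic and can be sketched in a few lines. This version uses an RBF kernel and the simple biased estimator on tiny hand-made 2-D feature vectors. The kernel choice, the `gamma` value, and the features are assumptions for illustration, since the text does not specify what GLIPS uses internally.

```python
from math import exp


def rbf_kernel(x, y, gamma):
    """RBF similarity between two feature vectors."""
    return exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, y)))


def mmd_squared(xs, ys, gamma=1.0):
    """Biased estimate of squared MMD between two samples of feature vectors."""
    def mean_kernel(first, second):
        total = sum(rbf_kernel(p, q, gamma) for p in first for q in second)
        return total / (len(first) * len(second))

    return mean_kernel(xs, xs) + mean_kernel(ys, ys) - 2.0 * mean_kernel(xs, ys)


real_features = [(0.0, 0.0), (0.2, 0.1), (0.1, 0.3)]
close_features = [(0.05, 0.0), (0.2, 0.15), (0.1, 0.25)]  # nearly the same distribution
shifted_features = [(2.0, 2.0), (2.2, 2.1), (2.1, 2.3)]   # clearly different distribution

print("close:", mmd_squared(real_features, close_features))
print("shifted:", mmd_squared(real_features, shifted_features))
```

The closer the generated distribution sits to the real one, the closer the statistic gets to zero, which is what makes it usable as the “global” half of a perceptual score.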

Comparing 2023 Benchmarks to 2026 Realities

The progress in three years has been categorical. What was once considered “cutting edge” in 2023 is now viewed as “AI Slop.”

| Capability | 2023 Baseline (e.g., Gen-2) | 2026 Standard (Sora 2 / Wan 2.5) |
| --- | --- | --- |
| Object Interaction | Objects morph into hands; liquids pass through glass. | Causal Logic: Liquid splashes accurately; hands maintain five fingers. |
| Temporal Span | 3–5 seconds before drifting into chaos. | 25+ seconds with storyboard-level consistency. |
| Motion Flow | Jerky, “weightless” walking. | Fluid Kinematics: Subtle weight shifts and organic secondary motion. |
| Physics Simulation | Constant gravity violations. | Inertia & Particles: Accurate smoke, water, and cloth dynamics. |

Humanity’s Last Exam (HLE)

At the pinnacle of the testing hierarchy is Humanity’s Last Exam (HLE), developed by CAIS and Scale AI. It is designed to be the final academic benchmark before AI reaches expert-level human reasoning.

- The 2026 SOTA: In December 2025, Zoom’s Federated AI approach (using a “Z-scorer” to orchestrate multiple models) achieved a record score of 48.1% on the full-set HLE.

- Visual Reasoning: Approximately 14% of HLE questions require multimodal reasoning (interpreting complex diagrams or scientific charts). This tests the model’s ability to “think” about what it sees, rather than just identifying patterns.

- The Expert Gap: While top models are approaching the 50% mark, human subject-matter experts still score roughly 90%, highlighting the remaining gap in deep, multi-step synthesis.

2026 Quality Checklist for MLOps

| Requirement | Standard | Tooling |
| --- | --- | --- |
| Visual Fidelity | GLIPS > 0.85 | GLIPS-rs / PyTorch-GLIPS |
| Perceptual Alignment | IBS Grade A/B | Interpolative Binning API |
| Physical Consistency | VBench-2.0 Pass | DynamicEval / VBench |
| Provenance | C2PA Verified | c2pa-rs Verify Tool |
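
In a pipeline, a checklist like this usually becomes a release gate. The sketch below encodes the four standards from the table as plain checks. The `QAReport` structure and its field names are hypothetical, and the values are assumed to come from whatever tooling produces each measurement upstream.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QAReport:
    # Hypothetical container for upstream measurements.
    glips: float         # visual fidelity score
    ibs_grade: str       # "A", "B", or "C"
    vbench_pass: bool    # physical consistency result
    c2pa_verified: bool  # provenance check result


def failed_requirements(report: QAReport) -> list:
    """Return the checklist rows the asset fails; an empty list means it can ship."""
    failures = []
    if not report.glips > 0.85:
        failures.append("Visual Fidelity")
    if report.ibs_grade not in {"A", "B"}:
        failures.append("Perceptual Alignment")
    if not report.vbench_pass:
        failures.append("Physical Consistency")
    if not report.c2pa_verified:
        failures.append("Provenance")
    return failures


good = QAReport(glips=0.91, ibs_grade="A", vbench_pass=True, c2pa_verified=True)
bad = QAReport(glips=0.80, ibs_grade="C", vbench_pass=True, c2pa_verified=False)

print("good asset failures:", failed_requirements(good))
print("bad asset failures:", failed_requirements(bad))
```

Returning the list of failed rows, rather than a single pass/fail flag, tells the owning team exactly which part of the checklist to fix.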

Conclusion:

In 2026, visual AI QA relies on a “hybrid defense.” This system combines human review with forensic tools like SSTGNN and agentic judges within MLOps platforms like Vertex AI.

While AI still struggles with complex physical movements like lip-syncing, new world models are closing the gap. OpenAI Sora 2 and NVIDIA Cosmos now simulate physical environments with high accuracy. In 2026, “Acceptable Quality” is defined by commercial use; if a generated person looks natural in a news or marketing clip, it passes.

The software testing market is projected to reach USD 99 billion by 2035. The maturity of your automated QA will determine if you can scale these AI initiatives or remain in the experimental phase.

Contact us for agentic AI consulting to help build your visual QA strategy.

FAQs:

1. How do you automate quality assurance for AI-generated video?

QA is automated using “AI-as-a-Judge” systems and MLOps infrastructure to replace subjective checks with high-dimensional benchmarks. The process includes a “hybrid defense” that combines human review with forensic tools and agentic judges.

- Agentic Paradigm: The industry uses “Agent-as-a-Judge” frameworks, which are multimodal systems that actively investigate the entire generation “trajectory” of the video.

- Tooling: Agents utilize external forensic tools like SSTGNN (Spatial-Spectral-Temporal Graph Neural Network) to diagnose temporal flickering in real-time and rPPG (remote photoplethysmography) for pulse-sign detection.

- Evaluation Rubric: Professional MLOps teams use discrete, actionable categories (e.g., Fully Correct, Incomplete, Contradictory) instead of 1–10 Likert scales to establish a reliable evaluation rubric.

2. What are the best tools for detecting ‘Uncanny Valley’ effects in 2026?

Detection tools focus on simulating human discomfort and identifying subtle biological “tells” that generative models struggle to master:

- Neuro-Symbolic AI: Used to approximate human discomfort by measuring high-dimensional distance metrics like Fréchet Inception Distance (FID) and Kernel Inception Distance (KID), which are reported to correlate with human unease.

- Humanity Markers: Forensic platforms look for three difficult-to-master biological “tells”:

  - Facial Action Unit (AU) Synchronization: To detect the “dead eye” effect (the lack of perfect orchestration between the eyes and the mouth during a smile).

  - Micro-Expression Fluidity: Analyzed using CNN-LSTM architectures to detect unnatural, abrupt transitions in emotions.

  - Speech-Motion Coordination (AVSFF): Analyzes the fine-grained relationship between the tongue, teeth, and jaw to ensure the audio and visual alignment is physically plausible.

3. Can AI models accurately judge the aesthetic quality of other AI models?

Yes, AI models are now used as “Agent-as-a-Judge” systems to evaluate and grade the output of generative models.

- Accuracy: State-of-the-art frameworks employ Multi-Agent Debate (using multiple agents—a critic, a defender, and an expert—to debate the output) which has reached a Kendall Tau correlation of 0.57 with human experts.

- Method: The agent observes the entire generation “trajectory” to pinpoint failures and utilizes tools (like SSTGNN) for verification.

- Best Practices: To align AI judges with human expertise, standard protocol includes Manual Ground-Truthing (human grading of a “Golden Set”), Anchor Examples (few-shot pre-graded samples), and a Chain-of-Thought (CoT) approach to create a step-by-step audit trail.

4. How do you measure motion coherence in a generative AI pipeline?

Motion Coherence (the physical logic of movement) is measured through specialized frameworks and metrics:

- Artifact Detection: The SSTGNN (Spatial-Spectral-Temporal Graph Neural Network) framework is the standard for diagnosing Temporal flickering in real-time by calculating Spatial-Temporal Differentials across video frames.

- Structural Integrity: The MoSA (Motion-coherent Structure-Appearance) framework is used during generation to eliminate “melted limbs” by first generating a sequence of 3D keypoints (the structural skeleton) that follow the laws of physics.

- Benchmarks: The VBench 2.0 suite is used for granular diagnostics:

  - Temporal Flickering: Metric goal $\text{Score} > 0.95$.

  - Motion Smoothness: Metric goal $\text{L1 Error} < 0.05$ (detecting jitter).

  - Physical Plausibility: VBench-2.0 Pass (checking for gravity, collision, and anatomical violations).

5. What is the standard ‘Acceptable Quality’ metric for AI-generated humans in 2026?

The authoritative metric for visual quality is the Global-Local Image Perceptual Score (GLIPS), which is focused on how humans process imagery, replacing the older Fréchet Inception Distance (FID).

- Standardized Scale: GLIPS results are mapped to the Interpolative Binning Scale (IBS), the standardized scale for non-engineers:

  - Grade A (Photorealistic): Indistinguishable from camera-captured content.

  - Grade B (High Quality): Minor flickering or soft edges; acceptable for social media.

  - Grade C (Uncanny): Detectable physical violations or “dead eye” effects.

- Bottom Line: Ultimately, “Acceptable Quality” is defined by commercial use: if a generated person looks natural and is fit for purpose (e.g., in a news or marketing clip), it passes.